First there was CI/CD. Now there's Continuous Quality.

AI made writing code cheap. Verifying it is still expensive.

A developer used to be the bottleneck. Now AI agents ship features faster than any team can review, test, and validate them. And the problem isn't just volume. A coding agent sees code. It can't see what happens when that code runs against your database, hits a third-party API, or encounters real user behavior in production.

That's the gap where bugs survive code review, pass unit tests, and detonate at 3 a.m. So the loop looks like this: prompt, code, check, find a bug, re-prompt. Repeat. Engineers burn hours babysitting AI output instead of building.

The problem isn't writing code anymore. It's trusting it.

A world where your prompt is the source of truth

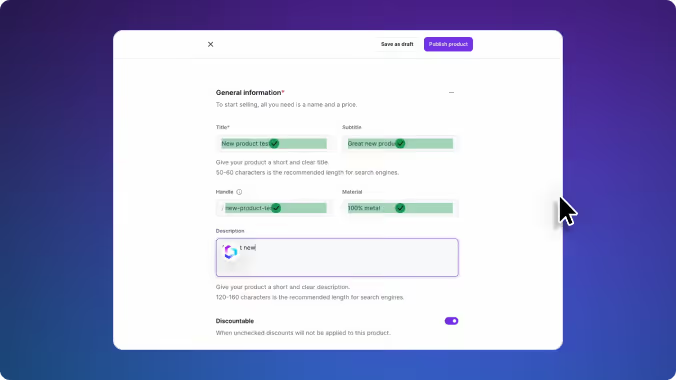

Today, developers write code and hope the tests catch what's wrong. The vision is different: a developer enters a prompt, an AI agent writes the code, and Checksum verifies it automatically. By the time an engineer looks at the output, it has already been executed and validated. No re-prompting loop. No manual test runs. Code that's ready to ship. This is what it means for quality to keep up with AI.

This is Continuous Quality.

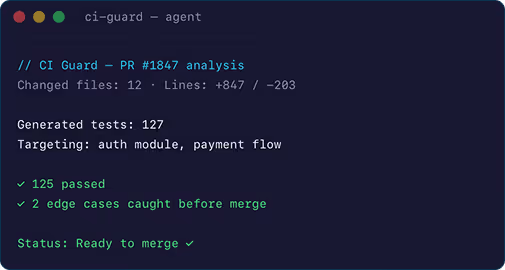

Coding agents need a quality agent to match

AI tools have made developers far more productive, but volume is only part of the problem. The bigger part is visibility. A coding agent sees code. What it can't see is what happens when that code runs against your database, your APIs, your configurations, and the unpredictable patterns of real users. That gap is where production incidents live. We call it the Context Void, and it's structural: a limit on what the agent can observe, not on what the model can do.

The solution is the same one that made autonomous vehicles possible: a world model. For self-driving cars, that means simulating physics, traffic, and weather. For software, it means simulating databases, APIs, configs, and real user behavior. Checksum builds that world model from real runtime signals, so the quality loop runs between two AI agents, not between an AI agent and an exhausted senior engineer.

Three stages of continuous quality

Test

The Code World Model: a simulation of your production environment built from real runtime signals, so the interactions between databases, APIs, configs, and real user behavior get tested before anything ships, not discovered in production at 3 a.m.

Fix

Generate and maintain coverage across every layer (E2E, API, and per-PR), continuously and autonomously. No manual effort required.

Prevent

A simulation of your production environment that catches what code review and unit tests can't: the interactions between databases, APIs, configs, and real user behavior that only surface at runtime.

Checksum is building the quality layer for autonomous engineering.

See where it starts.